
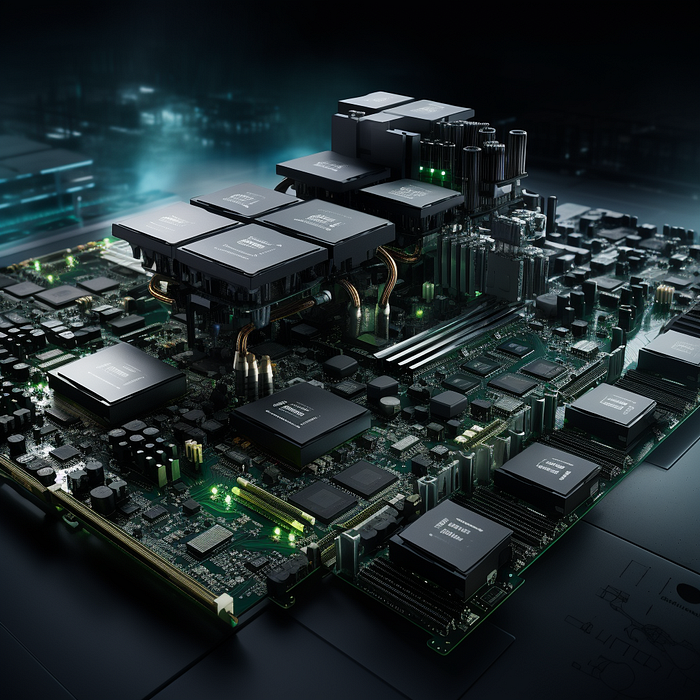

NVIDIA’s DGX GH200 Grace Hopper Supercomputer: A Game-Changer in the GPU Landscape

As artificial intelligence models grow more sophisticated, their computational demands are skyrocketing beyond the capabilities of individual GPUs and even multi-GPU platforms. A new class of users, including cloud providers, research institutions, and companies at the frontier of AI, urgently needs systems that can scale to massive datasets and models while remaining simple to program.

The NVIDIA DGX GH200 is architected to meet this need. It uses the NVIDIA NVLink Switch System to link 256 NVIDIA Grace Hopper Superchips, each pairing a Grace CPU with a Hopper GPU, into one giant pool of shared memory and processing power. This unified resource pool is designed to let AI workloads scale linearly, without interconnect bottlenecks, as they grow.

The DGX GH200 uniquely combines massive compute and memory in a single platform while retaining a single-GPU programming model. This combination allows organizations to develop giant neural networks, recommender systems, simulations, and other bleeding-edge AI applications that were previously infeasible.

The Grace Hopper Superchip is designed specifically for handling massive AI models, with a focus on shared memory and efficiency. The DGX GH200 fully integrates 256 of these Superchips so that they operate as a single giant GPU. The system delivers 1 exaflop of AI performance (FP8) and offers up to 144 terabytes of shared memory. This combination of memory and compute makes it ideal for terabyte-class AI models; the applications best placed to exploit it include massive recommender systems, generative AI, and graph analytics.
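The two headline numbers can be sanity-checked with a little arithmetic. The sketch below assumes per-Superchip figures from NVIDIA's public GH200/H100 specifications (96 GB of HBM3 on the Hopper GPU, 480 GB of LPDDR5X on the Grace CPU, and about 3,958 TFLOPS of peak FP8 Tensor Core throughput with sparsity); none of these values appear in this article, and the constant and function names are hypothetical.

```python
# Back-of-the-envelope check of the DGX GH200 headline numbers.
# ASSUMPTION: the per-superchip figures below come from NVIDIA's public
# GH200 / H100 specifications; they are not stated in this article.
NUM_SUPERCHIPS = 256
HBM3_GB_PER_SUPERCHIP = 96        # Hopper GPU memory (assumed)
LPDDR5X_GB_PER_SUPERCHIP = 480    # Grace CPU memory (assumed)
FP8_SPARSE_TFLOPS_PER_GPU = 3958  # peak FP8 Tensor Core, with sparsity (assumed)


def total_shared_memory_gb(num_superchips: int = NUM_SUPERCHIPS) -> int:
    """Total GPU + CPU memory across all superchips, in GB."""
    per_superchip = HBM3_GB_PER_SUPERCHIP + LPDDR5X_GB_PER_SUPERCHIP
    return num_superchips * per_superchip


def peak_fp8_exaflops(num_superchips: int = NUM_SUPERCHIPS) -> float:
    """Aggregate peak FP8 throughput in exaflops (1 exaflop = 1e6 TFLOPS)."""
    return num_superchips * FP8_SPARSE_TFLOPS_PER_GPU / 1e6


memory_gb = total_shared_memory_gb()
print(memory_gb)                      # 147456
print(memory_gb / 1024)               # 144.0
print(round(peak_fp8_exaflops(), 2))  # 1.01
```

Under these assumptions, 256 × 576 GB gives 147,456 GB, which is exactly 144 TB when counted in binary units (1 TB = 1,024 GB). The exaflop figure likewise only holds for FP8 with sparsity; higher-precision formats yield proportionally less.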

What Sets the DGX GH200 Apart?

- Trillion-Parameter Instrument of AI: The DGX GH200 is a new class of AI supercomputer that connects 256 NVIDIA Grace Hopper™ Superchips into a single giant GPU. It is tailored to terabyte-class models, making it ideal for massive recommender systems, generative AI, and graph analytics. With 144 terabytes (TB) of shared memory, it is designed to scale linearly to colossal AI models.

- Giant Memory for Giant Models: The DGX GH200 stands out as the only AI supercomputer…
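To put "trillion-parameter" next to 144 TB, here is a rough sizing sketch. The bytes-per-parameter values are common rules of thumb, not figures from this article: 2 bytes per parameter for FP16/BF16 weights alone, and about 16 bytes per parameter for mixed-precision training with the Adam optimizer (FP16 weights and gradients plus FP32 master weights and two FP32 optimizer moments). The helper names are hypothetical, and the estimate ignores activations, communication buffers, and fragmentation.

```python
# Rough sizing: how much memory does a trillion-parameter model need?
# ASSUMPTION: bytes-per-parameter values are rules of thumb, not article data.
SHARED_MEMORY_TB = 144  # capacity quoted in the article


def footprint_tb(num_params: float, bytes_per_param: float) -> float:
    """Memory for num_params values at bytes_per_param each, in decimal TB."""
    return num_params * bytes_per_param / 1e12


def fits(num_params: float, bytes_per_param: float,
         capacity_tb: float = SHARED_MEMORY_TB) -> bool:
    """True if the parameter/optimizer state fits (activations are ignored)."""
    return footprint_tb(num_params, bytes_per_param) <= capacity_tb


ONE_TRILLION = 1e12
print(footprint_tb(ONE_TRILLION, 2))   # 2.0  (FP16 weights only)
print(footprint_tb(ONE_TRILLION, 16))  # 16.0 (mixed-precision Adam state)
print(fits(ONE_TRILLION, 16))          # True
```

Even the heavier training estimate uses only a fraction of the pool, which is the point of a single shared address space: the state does not have to be hand-partitioned across many separate 80 to 96 GB GPU memories.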